Visual Intelligence

- Department: Image analysis and Earth observation

- Fields involved: Deep learning for complex image data, Marine image analysis

NR is part of Visual Intelligence, a Centre for Research-Based Innovation (SFI) that specialises in the use of artificial intelligence for complex image data.

In Visual Intelligence, we explore the next generation of deep learning for visual data and develop practical solutions in close collaboration with our partners in the consortium. Applications include medicine and healthcare, marine science, energy and Earth observation.

The next generation of methods for deep learning of image data

We develop solutions based on deep learning for visual data, working in close collaboration with leading industry partners across domains.

Our research particularly targets four key challenges in the field:

- How to address problems with limited training data

- How to incorporate information about context and dependencies

- How to develop models and methods that can estimate confidence and uncertainty

- How to design methods that provide explainable and trustworthy predictions

Interdisciplinary method development with broad applications

Interdisciplinary method development makes our research relevant in many different areas. By working methodically across domains, we create solutions that can be applied in diverse contexts. Our methods foster synergies between disciplines and generate value for both academia and industry.

Collaboration with research groups and industry partners is central to our work, and our research activities reflect this commitment.

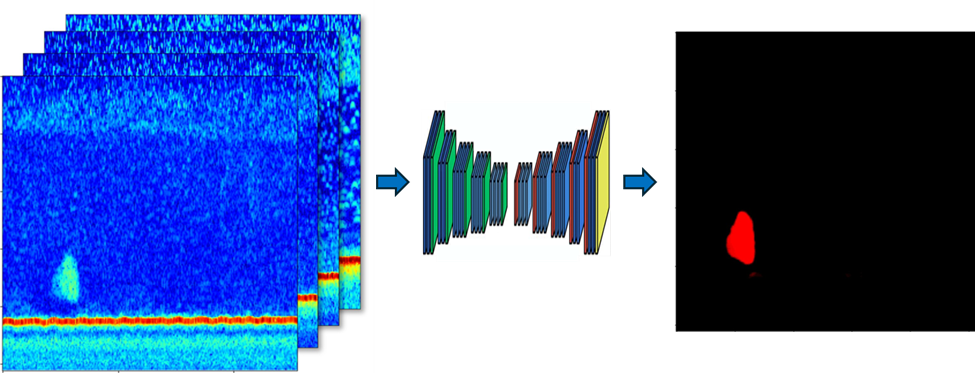

Marine image analysis

We work with the Institute of Marine Research (IMR) to analyse different types of image data, including marine acoustics, aerial images and microscopic images. Our objective is to improve monitoring, stock assessment and the general understanding of marine ecosystems.

Our work includes:

- developing models that detect and classify fish using acoustic data – a crucial part of estimating fish stock

- developing models that count seal pups in aerial images on ice-covered landmass in Greenland

- developing hierarchical models for classification of various types of plankton in microscopic images
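As a rough illustration of the hierarchical idea in the last item, the sketch below scores a coarse group first and then a species within that group, and multiplies the two probabilities. It is a minimal sketch under stated assumptions, not the model used in the project: the two-level taxonomy, the `hierarchical_probs` function and the input scores are all hypothetical.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

# Hypothetical two-level taxonomy, for illustration only.
TAXONOMY = {
    "diatom": ["chaetoceros", "skeletonema"],
    "copepod": ["calanus", "oithona"],
}

def hierarchical_probs(group_logits, species_logits):
    """Combine P(group) and P(species | group) into P(species)."""
    group_p = softmax(np.asarray(group_logits, dtype=float))
    out = {}
    for gp, (group, species) in zip(group_p, TAXONOMY.items()):
        cond = softmax(np.asarray(species_logits[group], dtype=float))
        for name, p in zip(species, cond):
            out[name] = float(gp * p)
    return out

# Made-up scores standing in for the outputs of a trained network.
probs = hierarchical_probs(
    [2.0, 0.5],
    {"diatom": [1.0, 0.0], "copepod": [0.0, 0.0]},
)
best = max(probs, key=probs.get)
```

A structure like this lets a model stay confident at the group level even when the species within the group are hard to tell apart.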


Research partners:

- UiT The Arctic University of Norway, Machine Learning Group (host)

- The University of Oslo, Digital Signal Processing and Image Analysis (DSB)

User partners:

- The Cancer Registry of Norway

- GE Healthcare

- The University Hospital of North Norway (UNN)

- Northern Norway Regional Health Authority

- The Institute of Marine Research

- Equinor

- Aker BP

- Kongsberg Satellite Services (KSAT)

Period: 2020 – 2028

Further resources:

Visual Intelligence (external page)

Visual Intelligence on LinkedIn (external page)

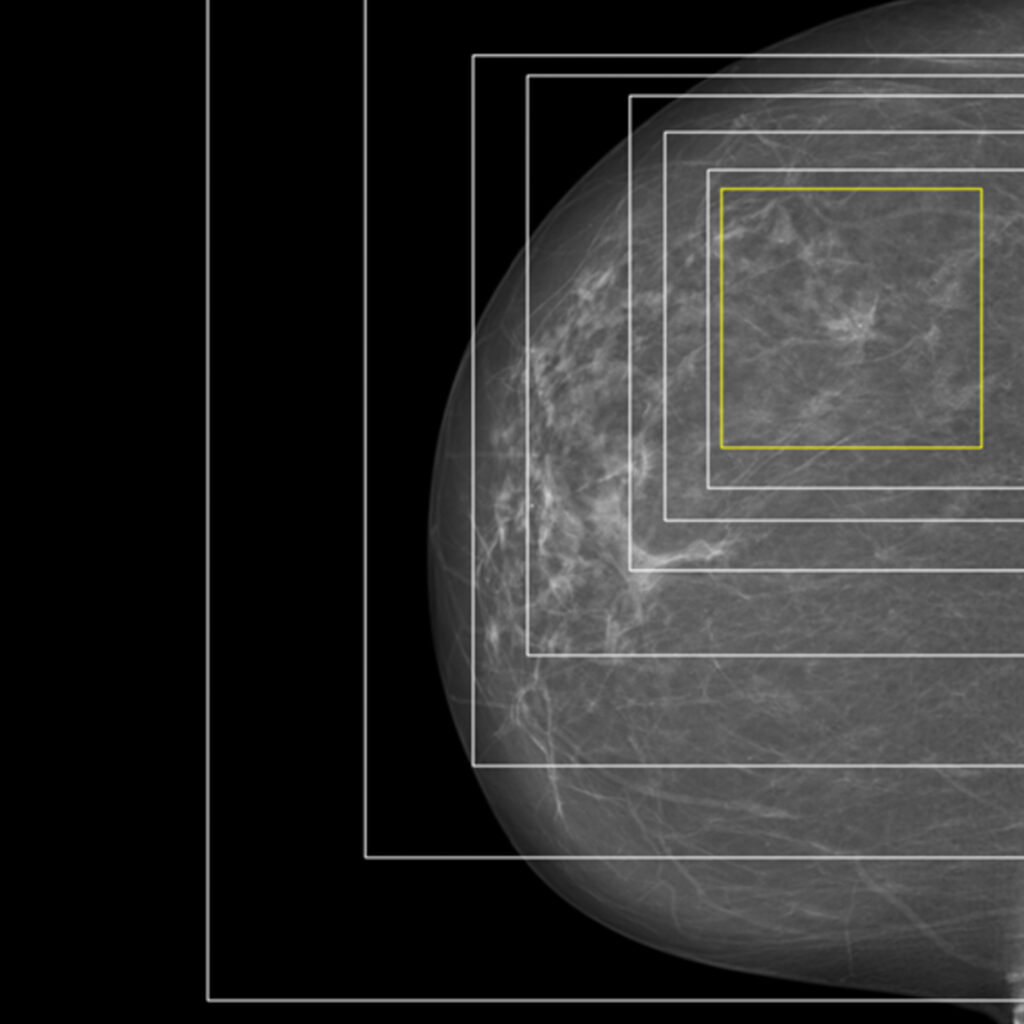

Medical image analysis

We develop methods for medical image analysis that support diagnostics and clinical decision-making.

Together with the Cancer Registry of Norway, we are creating analytical methods for mammograms. By using explainable artificial intelligence, we can highlight the areas of an image where a cancerous tumour is located, providing both insight and support for further medical assessment.

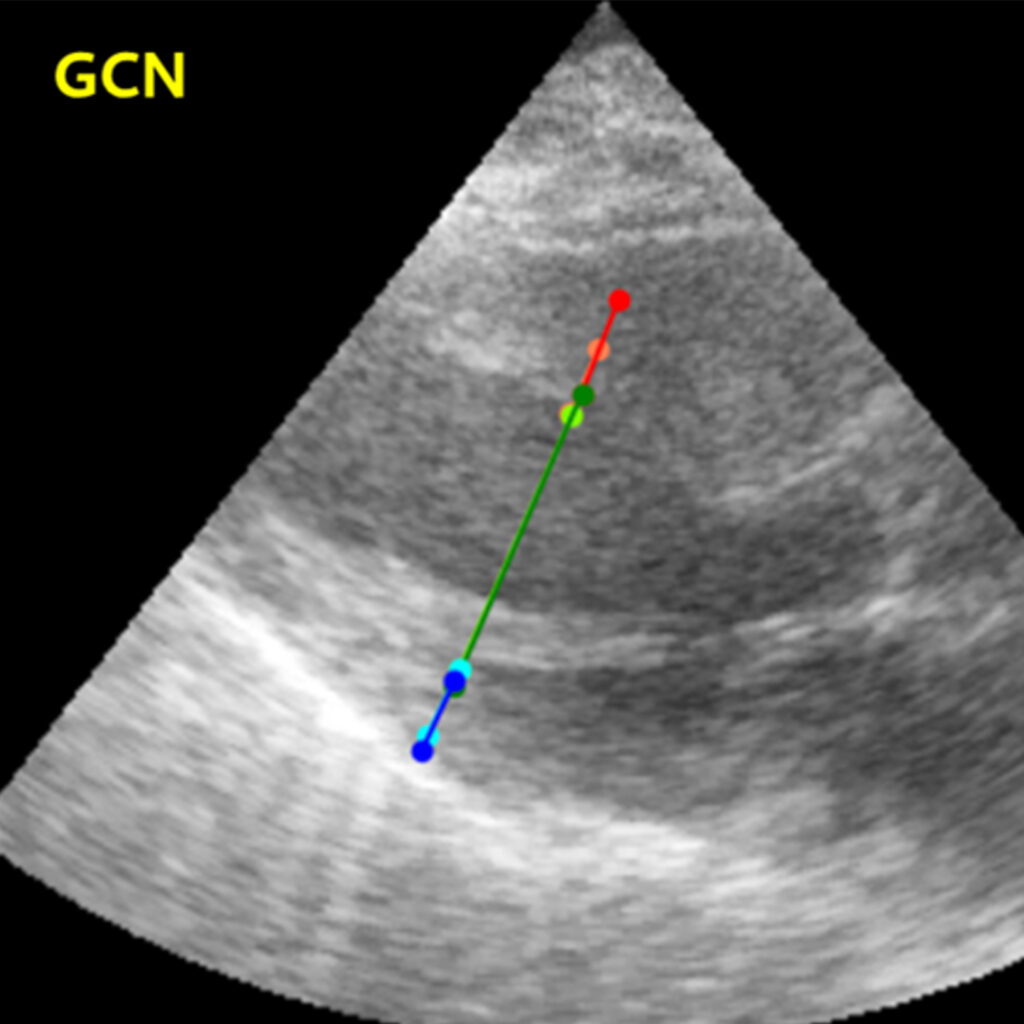

Another important collaboration is with GE Healthcare, where we analyse image sequences from cardiac ultrasound. Here, we apply graph convolutional networks to identify measurement points and model how they relate to each other.
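To give a sense of how a graph convolution lets connected points inform each other, the sketch below implements one layer of a commonly used formulation: each node averages its own features with those of its neighbours before a learned linear map and a ReLU. It is a minimal sketch, not the method used in the collaboration: the four-point chain graph, the random features and the `gcn_layer` function are hypothetical.

```python
import numpy as np

def gcn_layer(features, adjacency, weights):
    """One graph convolution step: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    a_hat = adjacency + np.eye(adjacency.shape[0])  # add self-loops
    deg = a_hat.sum(axis=1)                         # node degrees incl. self-loop
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    # Symmetric normalisation of the adjacency matrix.
    a_norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(a_norm @ features @ weights, 0.0)

# Hypothetical toy graph: four measurement points connected in a chain.
adjacency = np.array(
    [[0, 1, 0, 0],
     [1, 0, 1, 0],
     [0, 1, 0, 1],
     [0, 0, 1, 0]],
    dtype=float,
)
rng = np.random.default_rng(0)
features = rng.normal(size=(4, 3))  # 3 made-up features per point
weights = rng.normal(size=(3, 2))   # untrained weights, for illustration
updated = gcn_layer(features, adjacency, weights)  # shape (4, 2)
```

In a trained network, several such layers would be stacked so that information can travel between points that are not directly connected.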

We are also exploring the potential of foundation models in this field, particularly their ability to reduce the need for time-consuming manual annotation.

Energy

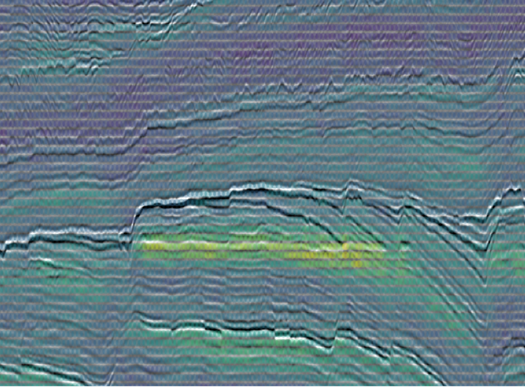

In collaboration with Equinor and Aker BP, we are developing a foundation model for seismic data.

Since collecting large amounts of complete training data in this field is particularly demanding, a model that reduces this requirement is especially valuable.

Building on this, we have developed an interactive solution for seismic data interpretation.

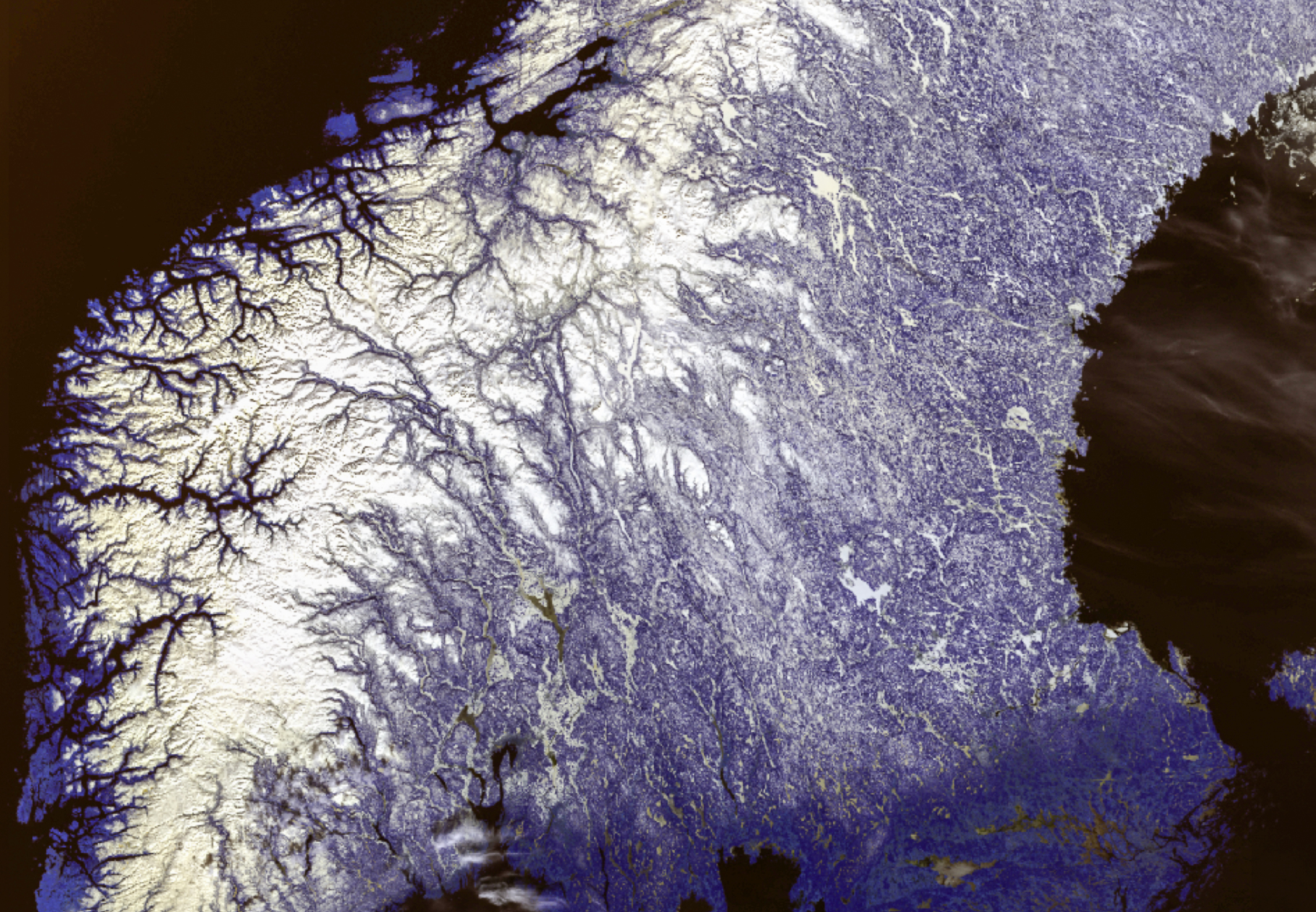

Earth observation

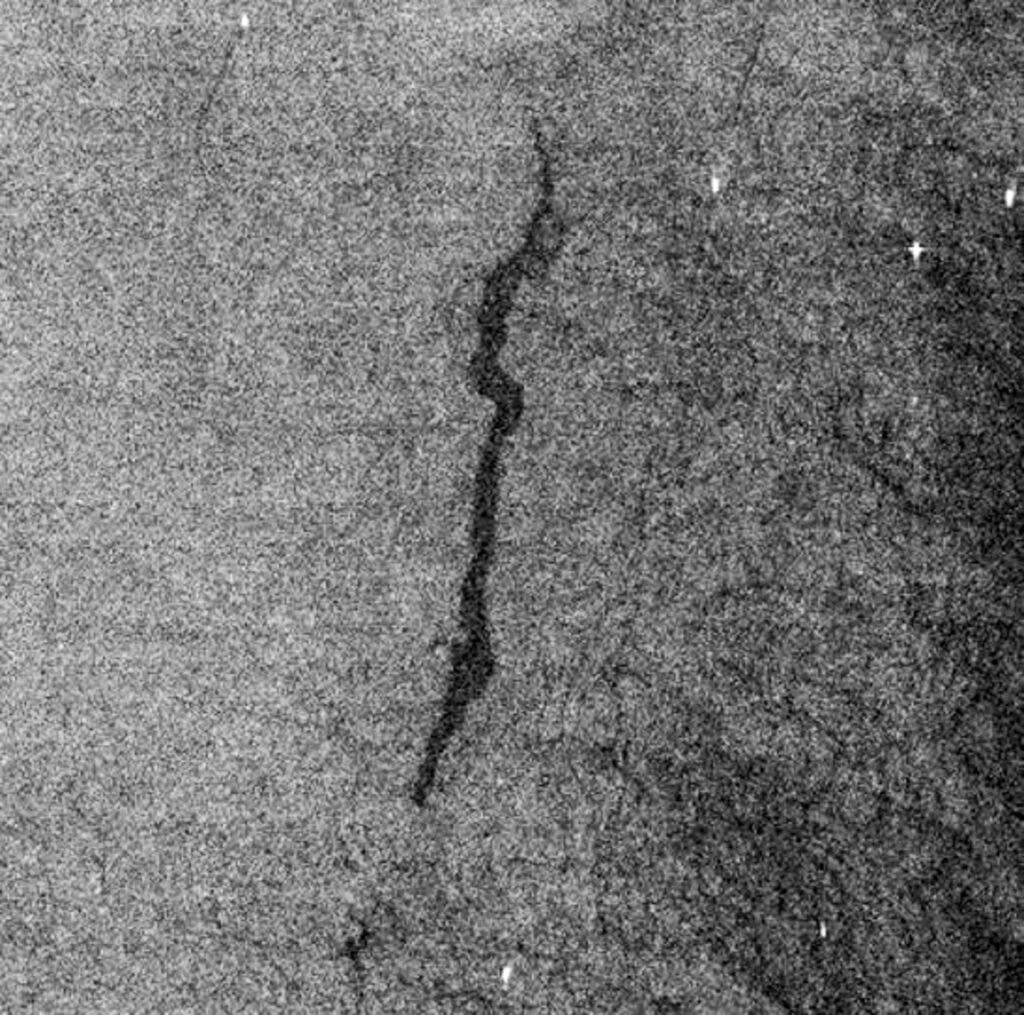

Together with Kongsberg Satellite Services (KSAT), we are exploring the use of foundation models for Earth observation to detect and map marine oil spills, based on data from radar satellites.

An important part of this work is integrating contextual information, such as wind speed and direction, since these data improve model performance and make the analyses more precise and reliable.

Selected projects

Our goal is to develop methods and tools that remain applicable and can be further advanced beyond the centre’s conclusion in 2028. Explore our projects below.